ChatGPT Health is here, and it’s trying to become your “health information hub.”

On January 7, 2026, OpenAI announced ChatGPT Health, a dedicated area inside ChatGPT designed specifically for health and wellness conversations. The headline feature is simple (and honestly… kind of wild): you can connect your medical records and select wellness apps so ChatGPT can respond using your personal health context, not generic internet vibes.

OpenAI’s framing is consistent throughout the announcement: this tool is built to help you feel more informed, prepared, and confident, while supporting, not replacing, clinical care. It’s not meant for diagnosis or treatment.

And if you’ve ever tried to piece together your health story across 14 portals, 6 apps, a random PDF, and that one lab that got faxed into the void… you already know why this is appealing to some patients.

What ChatGPT Health does (in plain English)

1) It creates a separate Health “space” inside ChatGPT

Health lives in its own compartment: your Health chats, files, connected apps, and “memories” are stored separately from your regular ChatGPT activity. OpenAI says this separation extends to memory, so health info doesn’t flow back into non-Health chats.

2) You can connect records + apps to ground answers in real data

OpenAI says you can connect:

- Medical records (via a partner network in the U.S.)

- Wellness apps like Apple Health, MyFitnessPal, and Function (availability depends on region/device; Apple Health requires iOS)

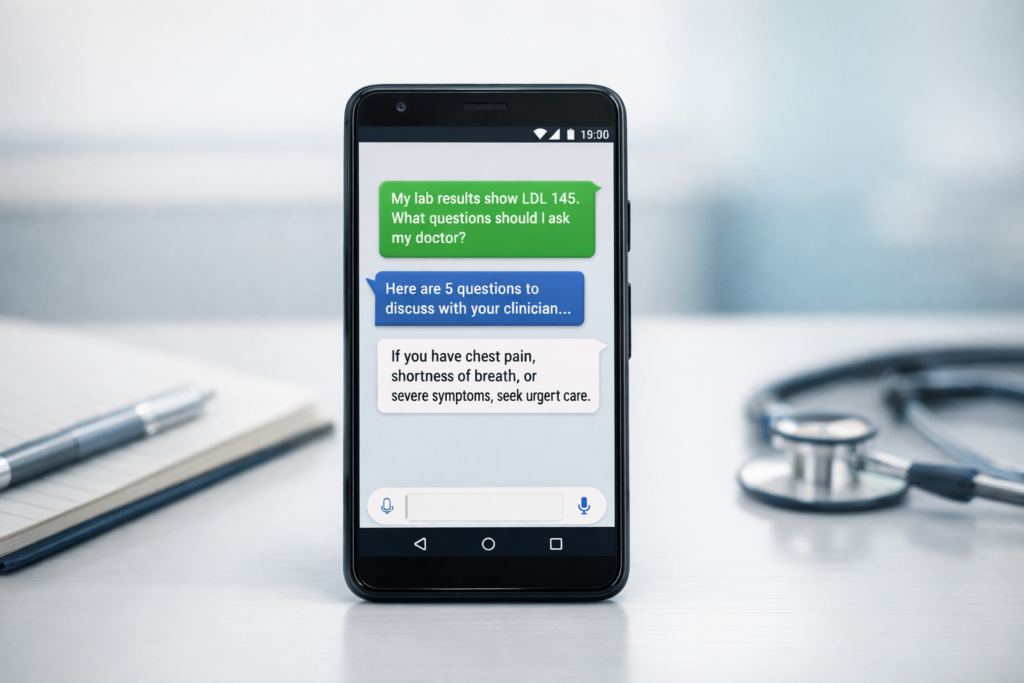

This grounding is the point: it’s not just “what is LDL cholesterol?” It’s “help me understand my LDL trend over time and what questions to ask my clinician.”

3) It’s rolling out gradually

OpenAI described early access via a waitlist and noted geographic limits: at launch, Health was not available in the EEA, Switzerland, or the UK. Some integrations are also U.S.-only.

Privacy & security: what OpenAI claims (and what you should understand)

OpenAI says ChatGPT Health includes additional protections such as purpose-built encryption and isolation, and that conversations in Health are not used to train foundation models. They also emphasize user controls like deleting Health memories and managing app access.

Now, here’s the adult part of the conversation: privacy isn’t just “what a company says,” it’s also “what laws apply.” Multiple commentators have pointed out that consumer AI tools generally aren’t automatically regulated like your doctor’s office under HIPAA, which is why some experts urge caution before uploading sensitive records.

So yes, OpenAI built privacy features. But patients should still treat this like any other digital platform that handles sensitive info: use the controls, minimize what you share, and be intentional.

Pros and cons for patients

Pros for patients

1) Less confusion, more clarity

If you’ve ever stared at a lab portal like it was written in ancient hieroglyphics, this is where ChatGPT Health shines: translating results, explaining terms, and summarizing patterns using your actual data context.

2) Better appointment prep

OpenAI explicitly positions Health for prepping questions, understanding what changed, and walking into visits with a tighter story, which can help patients advocate for themselves.

3) A single “hub” for scattered health info

Records, PDFs, app stats, notes… brought into one place and made searchable through conversation. That’s a real quality-of-life upgrade.

Cons (and real risks) for patients

1) Over-trust is the danger zone

Even if the tool is helpful 80–90% of the time, the other 10–20% matters when someone is deciding whether something is urgent. A Nature Medicine evaluation raised concerns about unsafe triage behavior in a structured stress test, including missed emergencies in some scenarios. That’s not a “cancel the product” statement; it’s a “don’t treat this like a clinician” reality check.

2) Privacy tradeoffs

OpenAI emphasizes protections, but experts still warn consumers to think carefully about uploading sensitive records and how consumer platforms fit into existing health privacy expectations.

3) Anxiety spiral potential

Wearables + labs + constant interpretation can turn into over-monitoring. If you’re already prone to health anxiety, an always-available explainer can be a gift… or gasoline. (That’s not unique to OpenAI; it’s a “humans + data” issue.)

Pros and cons for healthcare providers

Pros for providers

1) More prepared patients

When patients understand their results and show up with focused questions, visits can be more productive: less “what does this word mean?” and more “here’s what I’m noticing, do you agree?” That’s a win.

2) Potentially better adherence

Patients who understand the “why” behind a plan are more likely to follow it. Tools that rewrite instructions in plain language can help with that (especially after visits).

3) Better longitudinal storytelling

If patients use it responsibly, they can bring cleaner summaries of trends (sleep, activity, nutrition, symptoms) rather than chaotic screenshots.

Cons for providers

1) “AI said…” conversations

You may see more visits where the first 5 minutes are spent undoing a misunderstanding or reframing a non-urgent issue that the AI escalated (or, worse, failed to escalate). The recent structured triage critiques are a reminder that this is not hypothetical.

2) Workflow and liability ambiguity

Even if providers aren’t using the tool directly, patients may make decisions influenced by it. That can create friction, extra messaging volume, and questions about responsibility.

3) Equity gaps

Rollouts, device requirements (e.g., Apple Health on iOS), and digital literacy can widen the “who benefits” divide.

How to use ChatGPT Health responsibly (a quick checklist)

This is the part where we “stand on business” with patient advocacy and keeping people safe.

- Use it for organization + education, not diagnosis.

- If symptoms feel urgent or scary, don’t ask AI first; seek real care.

- Limit what you upload (minimum necessary).

- Turn off / clear memories if you don’t want ongoing context stored.

- Bring summaries to your clinician as a starting point, not a conclusion.

Bottom line

ChatGPT Health is aiming to be the middle layer between messy health data and real healthcare: the explainer, organizer, and prep coach. For patients, that can be empowering. For providers, it can mean better-prepped visits, but also more cleanup if people treat AI like a triage nurse.

The truth is that both things can be true at once: this can help a lot, and it can also cause harm if misused. I always encourage marginalized patients to advocate for themselves, so I can see ChatGPT Health being supportive, especially when you pair good tools with good judgment.

If you’re a patient: use this to prepare, not to self-diagnose.

If you’re a provider or health leader: start thinking now about patient education, “AI output” visit workflows, and what guardrails your org wants to recommend. If you want to get started, download this free HIPAA-compliant prompt checklist to help you prepare for patient-facing questions that may come from ChatGPT Health.
